this post was submitted on 14 Oct 2024

Climate - truthful information about climate, related activism and politics.

Discussion of climate, how it is changing, activism around that, the politics, and the energy systems change we need in order to stabilize things.

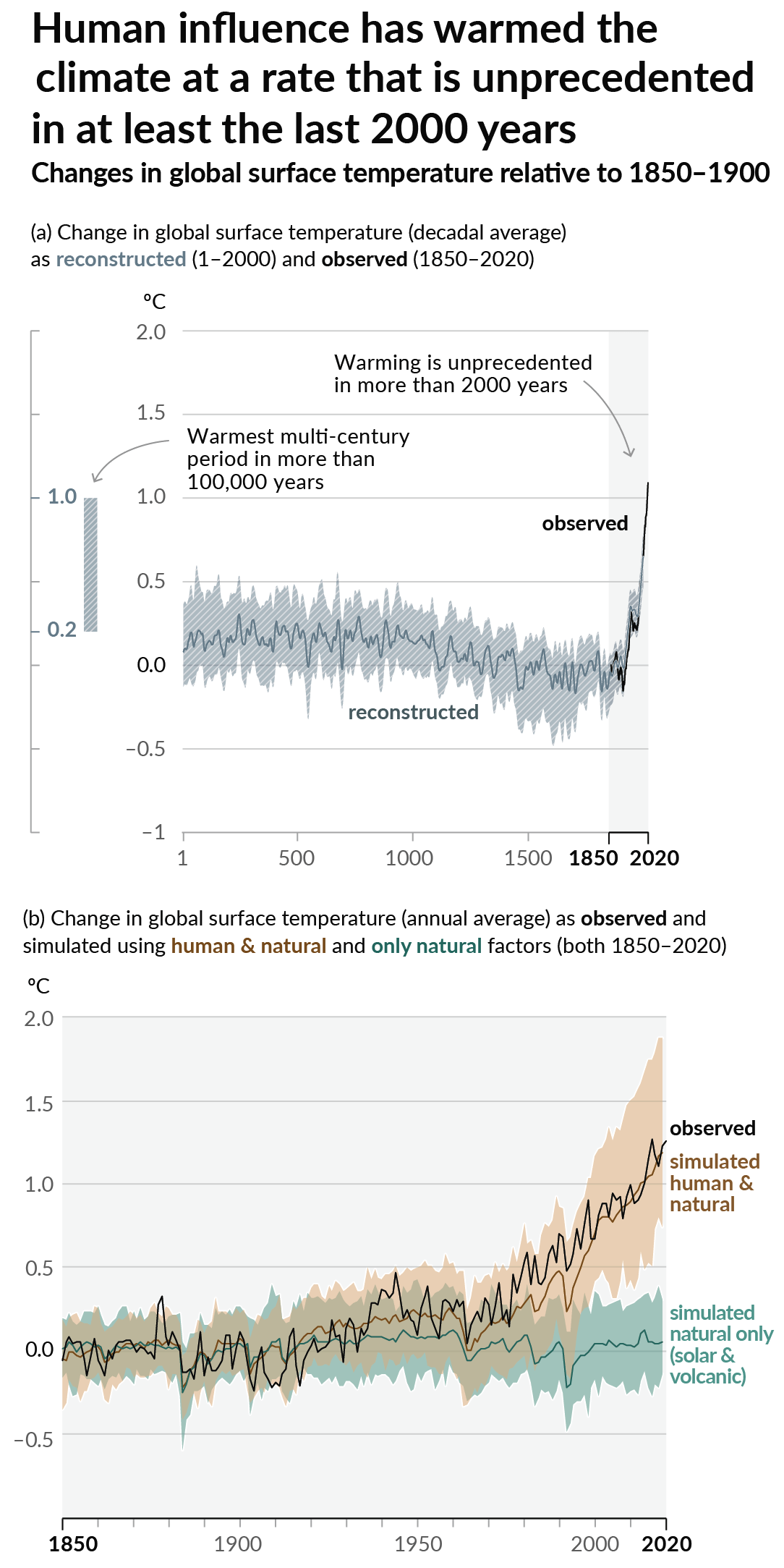

As a starting point, the burning of fossil fuels, and to a lesser extent deforestation and release of methane are responsible for the warming in recent decades:

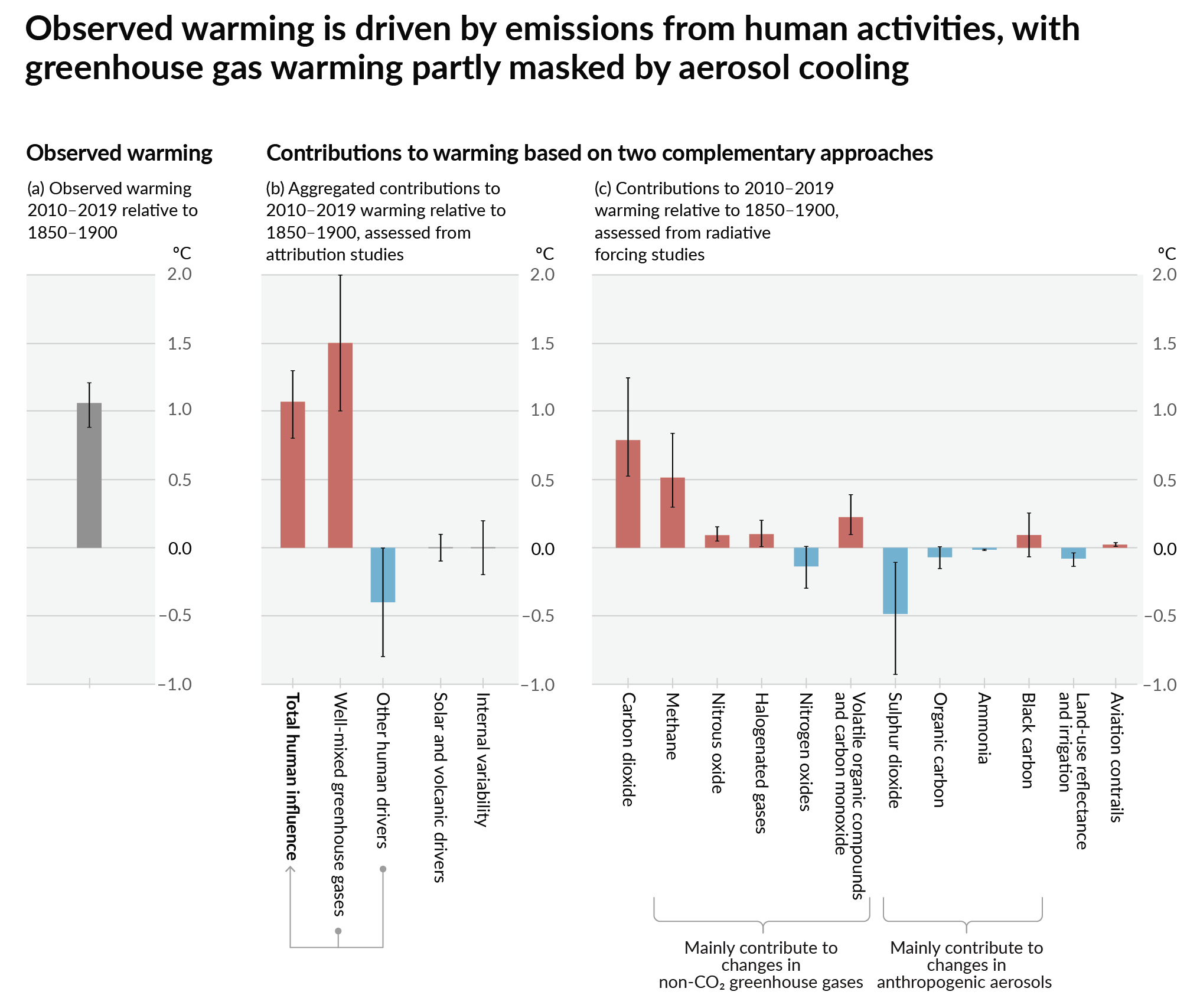

How much each change to the atmosphere has warmed the world:

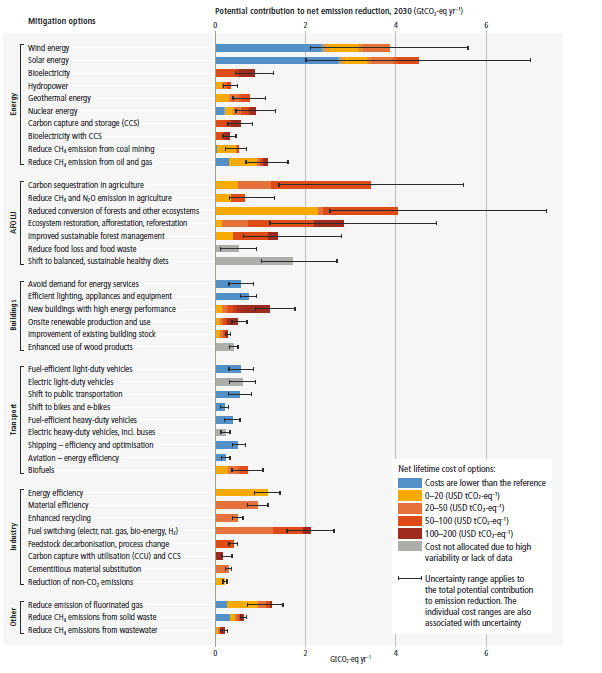

Anti-science, inactivism, and unsupported conspiracy theories are not ok here.

Hi, it's me. I am beyond excited for what LLMs have achieved in just a few years, and use them daily. Why Google for info and deal with clickbait BS with novels of unrelated garbage when I can just get a straightforward answer from AI? It's far from perfect, but it makes my workday 10x easier.

Well, for one, the clickbait BS has become worse because of the LLMs :P I think it's cool tech, don't get me wrong. Before it started getting shoved into every product and before its environmental impact started skyrocketing, I was keenly interested in it. In all honesty, I still am, but I'm keenly interested in what happens with it after the bubble pops and the real use cases are left behind. Text summarisation and code prediction are two genuine use cases I've seen have tremendous success. But I don't need an LLM built into every product I use, and I don't need every product I use to be training LLMs.

I'm having a hard time understanding. Can you offer an example of a product with an LLM built in that serves no purpose?

I didn't say they don't serve a purpose, I said that customers didn't ask for it/don't want it. I also said AI, not LLM (initially).

They serve a purpose, but in many cases are not well suited or ready for production.

Examples?

I use a food delivery app that added an AI chatbot to supposedly help you with restaurant and food suggestions, and it wasn't able to handle simple dietary restrictions (whereas standard filtering options on the search work just fine). A prompt as simple as "I'm looking for restaurants with vegan sandwiches" would return places that had vegan food and/or sandwiches. Even if it worked properly, just having adequate filter options and robust search is far more suited to the kinds of things users are doing on this kind of app.
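To make the "vegan food and/or sandwiches" problem concrete, here's a minimal sketch of the difference between a strict filter and the looser matching the chatbot seemed to be doing. Everything in it is hypothetical (the `Restaurant` and `MenuItem` classes, the tag names, the sample data); it is not how the app actually works, just an illustration of AND versus OR matching.

```python
from dataclasses import dataclass, field


@dataclass
class MenuItem:
    name: str
    tags: set = field(default_factory=set)  # hypothetical tags, e.g. {"vegan", "sandwich"}


@dataclass
class Restaurant:
    name: str
    menu: list


def has_item_with_all(restaurant, required):
    """Strict match: a single menu item must carry every required tag."""
    return any(required <= item.tags for item in restaurant.menu)


def has_any_tag(restaurant, wanted):
    """Loose match: some menu item carries at least one of the wanted tags."""
    return any(wanted & item.tags for item in restaurant.menu)


# Made-up sample data for illustration only.
restaurants = [
    Restaurant("Green Deli", [MenuItem("Tofu banh mi", {"vegan", "sandwich"})]),
    Restaurant("Sub Shack", [MenuItem("Ham sub", {"sandwich"})]),
    Restaurant("Salad Bar", [MenuItem("Kale bowl", {"vegan"}),
                             MenuItem("BLT", {"sandwich"})]),
]

query = {"vegan", "sandwich"}
strict = [r.name for r in restaurants if has_item_with_all(r, query)]
loose = [r.name for r in restaurants if has_any_tag(r, query)]

print(strict)  # only places with an actual vegan sandwich
print(loose)   # anything with vegan food and/or sandwiches
```

The strict version only returns the place that has a vegan sandwich on the menu, while the loose version returns all three, which is roughly the behaviour I was seeing.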

Search engines putting their in-house LLM-generated slop above actual search results, which is the equivalent of ensuring that the first search result is from a garbage site with hit-or-miss accuracy and relevance to the topic. I don't imagine it will be long before we start to see generative AI image results being served on the fly by in-house products when you search for images. Pass the user query to an LLM to generate a prompt for image gen, then pass that to image gen and bam! Slop!

Every fucking product has some stupid gradient-coloured ✨ AI button shoved into it now and it drives me up the wall.

Putting LLM autocomplete into IDEs? Great, as long as I can turn it off and as long as they're not training it on the code I write in the IDE. AI denoising in rendering software? Excellent. Image infill/outfill using AI in photo editing and drawing software? Spot on.