this post was submitted on 13 Jul 2025


Comic Strips



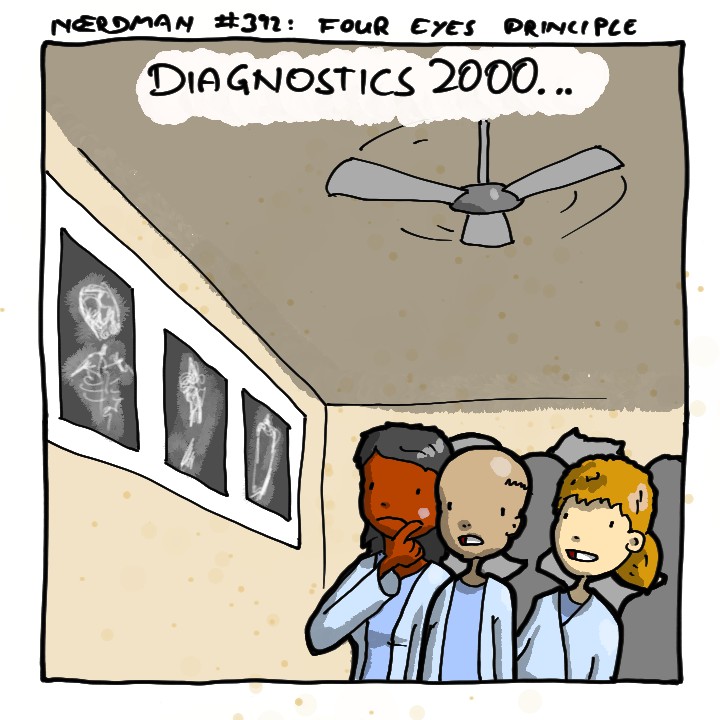

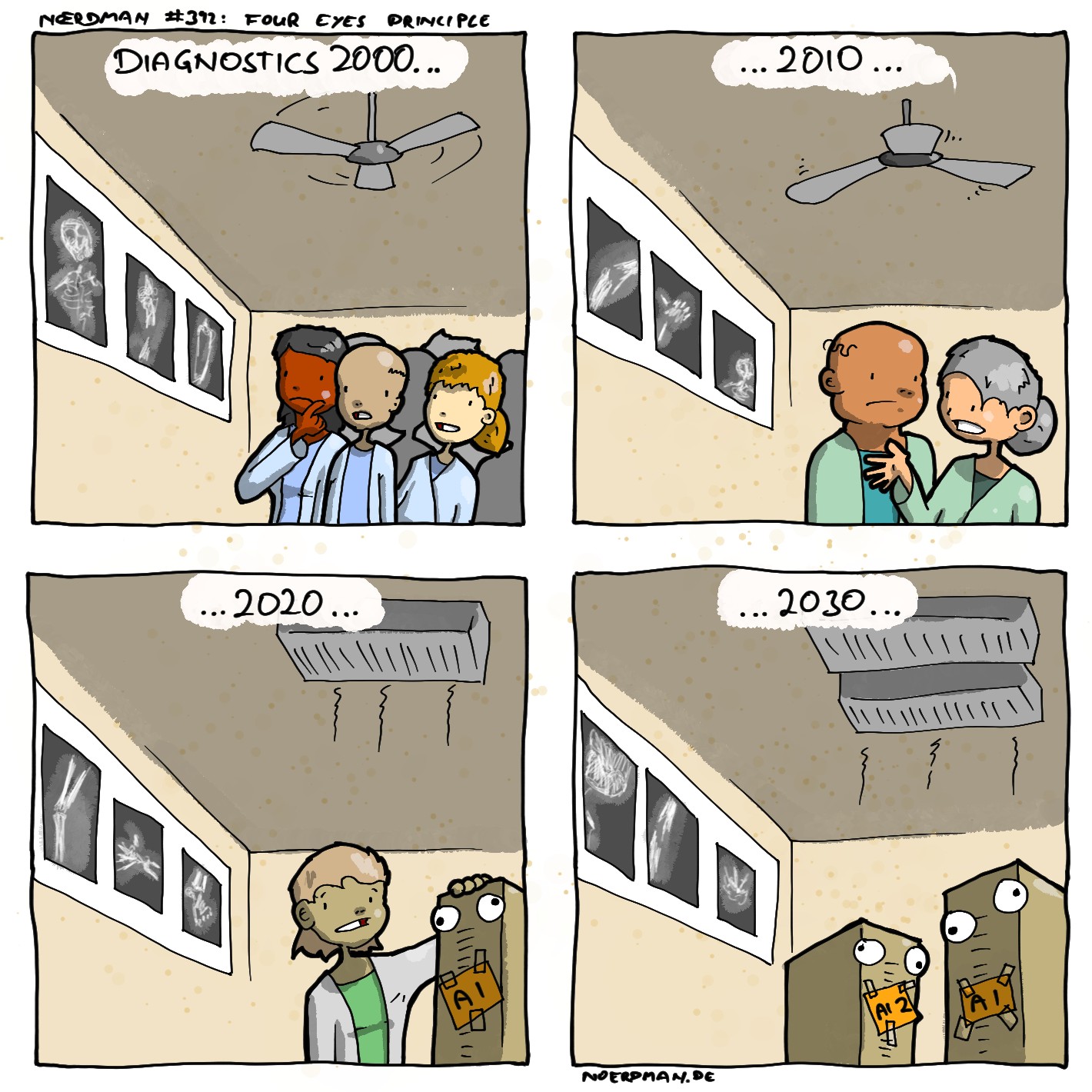

Neural networks work very similarly to human brains, so when somebody points out a problem with a NN, I immediately think about whether a human would do the same thing. A human could also easily fake expertise by looking at pen marks, for example.

And human brains themselves are also usually inscrutable. People generally come to conclusions without much conscious effort first. We call it "intuition", but it's really the brain subconsciously looking at the evidence and coming to a conclusion. Because it's subconscious, even the person who made the conclusion often can't truly explain themselves, and if they're forced to explain, they'll suddenly use their conscious mind with different criteria, but they'll basically always come to the same conclusion as their intuition due to confirmation bias.

But the point is that all of your listed complaints about neural networks are not exclusively problems of neural networks. They are also problems of human brains. And not just rare problems, but common problems.

Only a human who is very deliberate and conscious about their work doesn't fall into that category, but that limits the parts of your brain that you can use. And it also takes a lot longer and a lot of very deliberate training to be able to do that. Intuition is a very important part of our minds, and can be especially useful for very high level performance.

Modern neural networks have their training data manipulated and scrubbed to avoid issues like you brought up. It can be done by hand, for additional assurance, but it is also automatically done by the training software. If your training data is an image, the same image will be used repeatedly. For example, it will be used in its original format. It can be rotated and used. Cropped and used. Manipulated using standard algorithms and used. Or combinations of those things.
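To make the "same image, used repeatedly" idea concrete, here is a rough sketch of that kind of augmentation in plain Python. It is only an illustration: the function names (`rotate90`, `flip_horizontal`, `crop`, `augment`) and the list-of-rows image format are assumptions made up for this example, not the API of any particular training framework, and real pipelines use many more transformations than this.

```python
from typing import List

# Assumption for this sketch: a grayscale image is a list of rows of pixel values.
Image = List[List[int]]


def rotate90(img: Image) -> Image:
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def crop(img: Image, top: int, left: int, height: int, width: int) -> Image:
    """Cut out a height x width window whose top-left corner is (top, left)."""
    return [row[left:left + width] for row in img[top:top + height]]


def augment(img: Image) -> List[Image]:
    """Return the original image plus a few transformed variants of it."""
    h, w = len(img), len(img[0])
    return [
        img,                                      # original format
        rotate90(img),                            # rotated
        flip_horizontal(img),                     # a standard manipulation
        crop(img, 0, 0, h - 1, w - 1),            # cropped
        rotate90(crop(img, 1, 1, h - 1, w - 1)),  # a combination: crop, then rotate
    ]


if __name__ == "__main__":
    sample = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    for variant in augment(sample):
        print(variant)
```

The point is that one labelled image becomes several training examples, so a feature that only shows up in one spot of the original, such as a mark in a corner, is a less reliable shortcut for the network.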

Pen marks wouldn't even be an issue today, because images generally start off digital, and those raw digital images can be used. Just like any other medical tool, it wouldn't be used unless it could be trusted. It would be trained and validated like any NN, and even then ordinary radiologists wouldn't just start relying on it. It is first used in simulated diagnosis by expert radiologists who understand the system well enough to report problems. There is no technological or practical reason to think that humans will always have better outcomes than even today's AI technology.

While the model of a unit in a neural network is somewhat reminiscent of a very simplified, behaviouristic model of a neuron, the idea that a NN is similar to a brain is just plain wrong.

And I'm afraid, based on what you wrote, you didn't understand what this story means and why I told it.